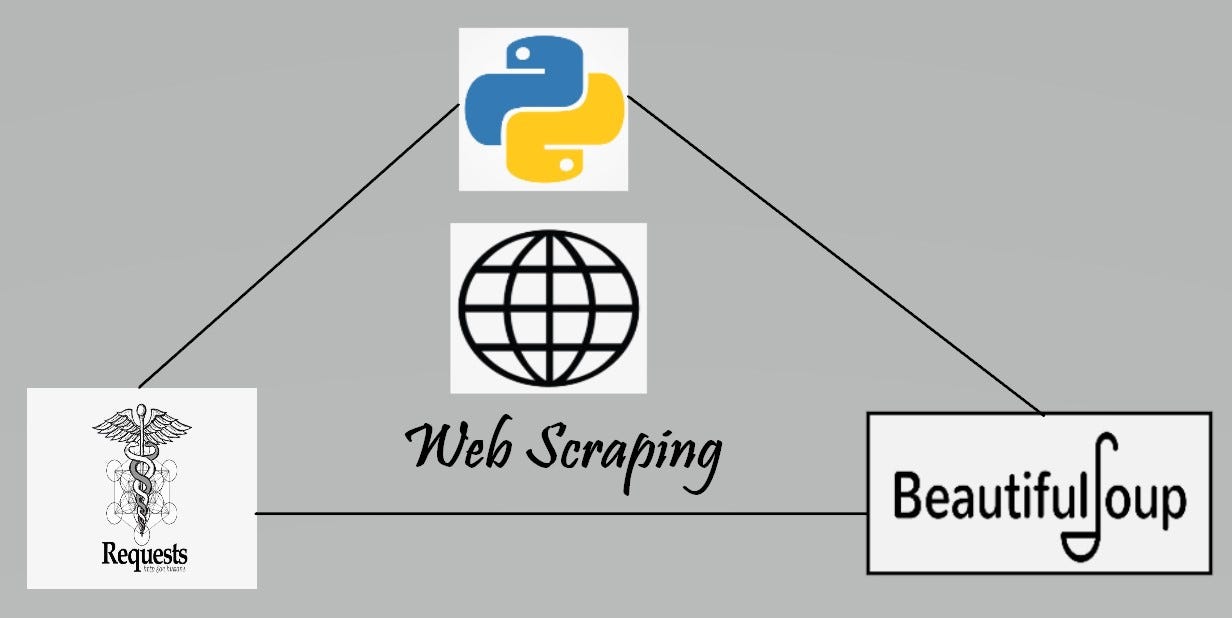

JavaScript is a widely used programming language, and an ever-increasing number of websites use it to fetch and render user content. While there are various tools available for web scraping, a growing number of people are exploring JavaScript web scraping tools.

To carry out your web scraping projects, you need to know the available tools well enough to choose the right one. We will walk through open-source JavaScript tools and frameworks that are great for web crawling, web scraping, parsing, and extracting data.

Open Source Javascript Web Scraping Tools and Frameworks

| Tool | GitHub Stars | GitHub Forks | GitHub Open Issues | Last Updated | Documentation | License |
|---|---|---|---|---|---|---|
| Apify SDK | 22K | 1.4K | 216 | June 2020 | Excellent | MIT |
| NodeCrawler | 5.4K | 828 | 23 | Nov 2015 | Good | MIT |
| Puppeteer | 62K | 6.4K | 1,039 | June 2020 | Excellent | Apache License 2.0 |
| Playwright | 13.3K | 402 | 115 | May 2020 | Good | Apache License 2.0 |
| Node SimpleCrawler | 2K | 344 | 51 | April 2020 | Good | BSD 2-Clause |
| PJScrape | 1K | 175 | 28 | Oct 2011 | Poor | MIT |
| Cheerio | 22K | 1.4K | 216 | April 2020 | Good | MIT |

Note: All details in the table above are current at the time of writing this article.

Apify SDK

Apify SDK is a Node.js library that, much like Scrapy in the Python world, positions itself as a universal web scraping library for JavaScript, with support for Puppeteer, Cheerio, and more. Two features stand out: RequestQueue lets you start with several URLs and then recursively follow links to other pages, and AutoscaledPool runs the scraping tasks at the maximum capacity of the system.
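To make these two ideas concrete, here is a minimal, dependency-free sketch of a de-duplicating request queue drained by a small pool of workers. It does not use the Apify SDK API: `crawl`, `fetchLinks`, and the in-memory `site` map are hypothetical names chosen for illustration, and the pool here has a fixed size, whereas an autoscaled pool would adjust its concurrency to the available system resources.

```javascript
// Minimal sketch: a de-duplicating request queue drained by a bounded pool of workers.
// `fetchLinks` is a hypothetical async function: given a URL, it resolves to the
// URLs linked from that page. Below it is backed by an in-memory map, not the network.
async function crawl(startUrls, fetchLinks, maxConcurrency = 2) {
  const seen = new Set(startUrls); // every URL ever enqueued, for de-duplication
  const queue = [...seen];         // pending requests
  const visited = [];              // URLs handled, in completion order
  let active = 0;                  // workers currently running

  return new Promise((resolve, reject) => {
    const pump = () => {
      // Finished when nothing is queued and nothing is in flight.
      if (queue.length === 0 && active === 0) return resolve(visited);
      // Start workers until the pool is full or the queue is empty.
      while (active < maxConcurrency && queue.length > 0) {
        const url = queue.shift();
        active++;
        fetchLinks(url)
          .then((links) => {
            visited.push(url);
            for (const link of links) {
              if (!seen.has(link)) {
                seen.add(link);
                queue.push(link); // recursively follow newly discovered links
              }
            }
          })
          .catch(reject)
          .finally(() => {
            active--;
            pump();
          });
      }
    };
    pump();
  });
}

// Hypothetical four-page site used in place of real HTTP requests.
const site = {
  '/': ['/a', '/b'],
  '/a': ['/b', '/c'],
  '/b': ['/'],
  '/c': [],
};
const fetchLinks = async (url) => site[url] || [];

crawl(['/'], fetchLinks).then((visited) => console.log(visited));
```

Starting from a single URL, the sketch reaches every page of the toy site exactly once, even though the pages link back to each other.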

Requirements – The Apify SDK requires Node.js 10.17 or later

Available Selectors – CSS

Available Data Formats – JSON, JSONL, CSV, XML, Excel or HTML

Pros

- Supports any type of website

- The best library for web crawling in JavaScript we have tried so far

- Built-in support for Puppeteer and Cheerio

Installation

Add Apify SDK to any Node.js project by running `npm install apify`.

Best Use Case

Apify SDK is a preferred tool when other solutions fall flat during heavier tasks: performing deep crawls, rotating proxies to mask the browser, scheduling the scraper to run multiple times, caching results to prevent data loss if the code happens to crash, and more. Apify handles such operations with ease, but it can also help you develop web scrapers of your own in JavaScript.

Node SimpleCrawler

Simplecrawler is designed to provide a basic, flexible, and robust API for crawling websites. It was written to archive, analyze, and search some very large websites and can get through hundreds of thousands of pages and write large volumes of data without issue. It has a lot of useful events that can help you track the progress of your crawling process. This crawler is extremely configurable and there’s a long list of settings you can change to adapt it to your specific needs.
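The event-driven style described above can be sketched with Node's built-in `EventEmitter`. The `TinyCrawler` class below is a hypothetical stand-in that reads pages from an in-memory object; it is not the simplecrawler API. The event names `fetchcomplete` and `complete` mirror events that simplecrawler emits, but the payload shape used here is an assumption made for illustration.

```javascript
const { EventEmitter } = require('events');

// Hypothetical stand-in for an event-driven crawler; not the simplecrawler API.
// It "fetches" pages from an in-memory object and reports progress through events.
class TinyCrawler extends EventEmitter {
  constructor(pages) {
    super();
    this.pages = pages; // map of URL -> body text
  }

  start() {
    const urls = Object.keys(this.pages);
    urls.forEach((url, index) => {
      // One event per fetched page, carrying the URL, its body, and a progress counter.
      this.emit('fetchcomplete', {
        url,
        body: this.pages[url],
        done: index + 1,
        total: urls.length,
      });
    });
    this.emit('complete'); // fired once, after the last page
  }
}

// Track progress by subscribing to events before starting the crawl.
function runWithProgress(pages) {
  const log = [];
  const crawler = new TinyCrawler(pages);
  crawler.on('fetchcomplete', (item) =>
    log.push(`${item.done}/${item.total} ${item.url} (${item.body.length} chars)`)
  );
  crawler.on('complete', () => log.push('finished'));
  crawler.start();
  return log;
}

console.log(runWithProgress({ '/': '<h1>Home</h1>', '/about': '<p>About</p>' }));
```

Because listeners are attached separately from the crawl logic, progress reporting, archiving, and analysis can each subscribe to the same events without changing the crawler itself.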

Requirements – Node.js 8.0+

Pros

- Respects robots.txt rules

- Highly configurable

- Easy setup and installation

Cons

- Does not download the response body when it encounters an HTTP error status in the response

- No promise support

- May get invalid URLs because of its brute force approach

Installation

To install simplecrawler, run `npm install --save simplecrawler`.

Best Use Case

If you need a flexible and configurable base for writing your own crawler.

NodeCrawler

Nodecrawler is a popular and fast web crawler for Node.js. If you prefer coding in JavaScript, or you are working on a mostly JavaScript project, Nodecrawler will be the most suitable web crawler to use. Its installation is pretty simple too. It uses Cheerio (the default) or JSDOM for server-side HTML parsing, with JSDOM being the more robust of the two.

Requires Version – Node v4.0.0 or greater

Available Selectors – CSS, XPath

Available Data Formats – CSV, JSON, XML

Pros

- Easy installation

Cons

- It has no Promise support

Installation

To install this package with npm, run `npm install crawler`.

Best Use Case

If you need a lightweight web crawler that combines efficiency and convenience.

PJScrape

PJscrape is a web scraping framework written in JavaScript using jQuery. It is built to run with PhantomJS, so it allows you to scrape pages in a fully rendered, JavaScript-enabled context from the command line, with no browser required. The scraper functions are evaluated in a full browser context. This means you not only have access to the DOM, but you also have access to JavaScript variables and functions, AJAX-loaded content, etc.

Requires Version – Node v4.0.0+, PhantomJS v.1.3+

Available Selectors – CSS

Available Data Format – JSON

Pros

- Easy installation and setup for more than one scraper

- Suitable for recursive crawling

Cons

- Poor documentation

Installation

To install this package with npm:

Best Use Case

If you need a web scraping tool in JavaScript and jQuery.

Puppeteer

Puppeteer is a Node library which provides a powerful but simple API that allows you to control Google’s headless Chrome browser. A headless browser means you have a browser that can send and receive requests but has no GUI. It works in the background, performing actions as instructed by an API. You can truly simulate the user experience, typing where they type and clicking where they click.

A headless browser is a great tool for automated testing and server environments where you don’t need a visible UI shell. For example, you may want to run some tests against a real web page, create a PDF of it, or just inspect how the browser renders a URL. Puppeteer can also take screenshots of web pages as they would appear when opened in a regular browser.

Puppeteer’s API is very similar to Selenium WebDriver, but it works only with Google Chrome. Puppeteer is more actively supported than Selenium, so if you are working with Chrome, Puppeteer is your best option for web scraping.
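As a rough illustration of scraping through a controlled browser, the sketch below collects the text of every element matching a selector. The helper name `scrapeText`, the URL, and the selector are assumptions made for this example; the function only relies on `page.goto` and `page.$$eval`, which are part of Puppeteer's Page API, and takes the page as a parameter so the same code can be exercised with a stub.

```javascript
// Collects the trimmed text of every element matching `selector` on `url`.
// `page` is expected to behave like a Puppeteer Page: this sketch relies only on
// page.goto(url) and page.$$eval(selector, fn).
async function scrapeText(page, url, selector) {
  await page.goto(url);
  // The callback runs inside the browser, against the rendered DOM.
  return page.$$eval(selector, (elements) =>
    elements.map((el) => el.textContent.trim())
  );
}

// With Puppeteer installed, a real page could be supplied like this
// (inside an async function; URL and selector are placeholders):
//   const puppeteer = require('puppeteer');
//   const browser = await puppeteer.launch();   // headless by default
//   const page = await browser.newPage();
//   const headings = await scrapeText(page, 'https://example.com', 'h1');
//   await browser.close();
```

Because the extraction callback runs in the page itself, it sees content that was added by JavaScript after the initial HTML was loaded.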

Requires Version – Node v6.4.0 or greater (Node v7.6.0 or greater for async/await)

Available Selectors – CSS

Available Data Formats – JSON

Pros

- With its full-featured API, it covers a majority of use cases

- The best option for scraping JavaScript-heavy websites on Chrome

Cons

- Only available for Chrome/Chromium browser

- Supports only JSON format

Installation

To install Puppeteer in your project, run `npm i puppeteer`.

This will install Puppeteer and download a recent version of Chromium to run the Puppeteer code. By default, Puppeteer works with Chromium, but you can also use Chrome. You can also use the lightweight version of Puppeteer, puppeteer-core, which does not download Chromium by default. To install it, run `npm i puppeteer-core`.

Best Use Case

- If you need to test the speed, performance, responsiveness, and UI of a website.

- If you are using Chrome, Puppeteer is your best option for web scraping.

- If the information you want is generated using

Playwright

Playwright is a Node library to automate multiple browsers with a single API. It enables cross-browser web automation that is ever-green, capable, reliable, and fast. Playwright was created to improve automated UI testing by eliminating flakiness, improving the speed of execution, and offering insights into the browser operation.

Playwright is very similar to Puppeteer in many respects. The API methods are identical in most cases, and Playwright also bundles compatible browsers by default. Playwright’s biggest differentiating point is cross-browser support. It can drive Chromium, WebKit, MS Edge, and Firefox.

A noteworthy difference is that Playwright has a more powerful browser context feature than Puppeteer. This lets you simulate multiple devices with a single browser instance.
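The browser-context idea can be sketched as follows: one browser instance, several isolated contexts, each configured like a different device. The helper name `titlesPerDevice`, the profile names, and the user agent string are hypothetical; the calls used (`browser.newContext`, `context.newPage`, `page.goto`, `page.title`, `context.close`) and the `viewport` and `userAgent` options are part of Playwright's API.

```javascript
// Opens `url` once per device profile, each in its own isolated browser context.
// `browser` is expected to behave like a Playwright Browser.
async function titlesPerDevice(browser, url, profiles) {
  const results = {};
  for (const [name, options] of Object.entries(profiles)) {
    // Each context has its own cookies, storage, viewport, and user agent.
    const context = await browser.newContext(options);
    const page = await context.newPage();
    await page.goto(url);
    results[name] = await page.title();
    await context.close();
  }
  return results;
}

// Hypothetical device profiles for illustration.
const profiles = {
  desktop: { viewport: { width: 1280, height: 720 } },
  phone: { viewport: { width: 375, height: 667 }, userAgent: 'ExamplePhone/1.0' },
};

// With Playwright installed (inside an async function; the URL is a placeholder):
//   const { chromium } = require('playwright');
//   const browser = await chromium.launch();
//   console.log(await titlesPerDevice(browser, 'https://example.com', profiles));
//   await browser.close();
```

Creating a context is much cheaper than launching a new browser, which is what makes simulating several devices from a single instance practical.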

Requires Version – Node.js 10.15 or above.

Available Selectors – CSS

Available Data Formats – JSON

Pros

- Cross Browser support

- Detailed documentation

Cons

- The Playwright team has only patched the WebKit and Firefox debugging protocols, not the actual rendering engines

Installation

To install the package, run `npm i playwright`.

This installs Playwright and browser binaries for Chromium, Firefox, and WebKit. Once installed, you can use Playwright in a Node.js script and automate web browser interactions.

Best use case

If you need an efficient tool as good as Puppeteer to perform UI testing but across multiple browsers, you should use Playwright.

Cheerio

Cheerio is a library that parses raw HTML and XML documents and allows you to use the syntax of jQuery while working with the downloaded data. With Cheerio, you can write filter functions to fine-tune which data you want from your selectors. If you are writing a web scraper in JavaScript, Cheerio API is a fast option that makes parsing, manipulating, and rendering efficient.

Cheerio does not interpret the result as a web browser does: it does not produce a visual rendering, apply CSS, load external resources, or execute JavaScript. If you require any of these features, you should consider projects like PhantomJS or JSDOM.

Requirements – Up to date versions of Node.js and npm

Available Selectors – CSS

Pros

- Parsing, rendering and manipulating documents is very efficient

- Flexible, Easy to Use

- Very fast (preliminary end-to-end benchmarks suggest it is 8x faster than JSDOM)

Cons

- Does not fare well with dynamic JavaScript websites

Installation

To install the required modules using npm, simply run `npm install cheerio`.

Best Use Case

If you need speed, go for Cheerio.

These are just some of the open-source JavaScript web scraping tools and frameworks you can use for your web scraping projects. If you have greater scraping requirements or would like to scrape on a much larger scale, it’s better to use web scraping services.

If you aren’t proficient with programming or your needs are complex, or you need large volumes of data to be scraped, there are great web scraping services that will suit your requirements to make the job easier for you.

You can save time and get clean, structured data by trying us out instead – we are a full-service provider that doesn’t require the use of any tools and all you get is clean data without any hassles.

The UI Vision RPA software is the tool for visual process automation, codeless UI test automation, web scraping and screen scraping. Automate tasks on Windows, Mac and Linux.

The UI Vision RPA core is open-source with enterprise security. The free and open-source browser extension can be extended with local apps for desktop UI automation.

Install it now: Get RPA for Chrome, Get RPA for Firefox, Get RPA for Edge.

The links go to the official Chrome, Firefox and Edge extension stores.

(1) Visual Web Automation and UI Testing

UI.Vision RPA's computer-vision visual UI testing commands allow you to write automated visual tests with UI.Vision RPA - this makes UI.Vision RPA the first and only Chrome and Firefox extension (and Selenium IDE) that has '👁👁 eyes'. A huge benefit of doing visual tests is that you are not just checking one element or two elements at a time, you’re checking a whole section or page in one visual assertion.

The visual UI testing and browser automation commands of UI.Vision RPA help web designers and developers to verify and validate the layout of websites and canvas elements. UI.Vision RPA can read and recognize images and text inside canvas elements, images and videos.

UI.Vision RPA can resize the browser's window in order to emulate various resolutions. This is particularly useful to test layouts on different browser resolutions, and to validate visually perfect mobile, web, and native apps.

(2) Visual Desktop Automation for Windows, Mac and Linux

UI.Vision RPA can see and automate not only everything inside the web browser. It also uses image and text recognition technology (e.g. screen scraping) to automate your desktop (Robotic Process Automation, RPA). UI.Vision RPA’s eyes can read images and words on your desktop, and UI.Vision RPA’s hands can click, move, drag & drop, and type.

The desktop automation feature requires the installation of the freeware UI.Vision RPA Extension Modules (XModules). This is a separate software available for Windows, Mac and Linux. It adds the “eyes” and “hands” to UI.Vision RPA.

(3) Selenium IDE++ for hybrid web automation

The freeware RPA software includes standard Selenium IDE commands for general web automation, web testing, form filling, and web scraping. But UI.Vision RPA has a different design philosophy than the classic Selenium IDE. Like the classic Selenium IDE, it is a record-and-replay tool for automated testing, but beyond that it is a 'swiss army knife' for general web automation such as web scraping, automating file uploads, and form autofill. So it has many features that the classic IDE does not (want to) have. For example, you can run your macros directly from the browser as bookmarks or even embed them on your website. If there’s an activity you have to do repeatedly, just create a web macro for it. The next time you need to do it, the entire macro will run at the click of a button and do the work for you.

This short screencast demos how to automate form filling on our online ocr website with UI.Vision RPA. We record the macro, insert a PAUSE (3 seconds) command manually and then replay the macro twice.

The UI.Vision RPA software is an open-source alternative to iMacros and Selenium IDE, and supports all important Selenium IDE commands. When you invest the time to learn UI.Vision RPA, you learn Selenium IDE at the same time.

In addition, the 'low-code' UI.Vision RPA solution includes new web automation commands that are not found in the classic Selenium IDE, such as the ability to write and read CSV files (data-driven testing), visual checks, file download automation, PDF testing and the ability to take full page and desktop screenshots.

Integrate RPA with your favorite tools and scripting language(s)

UI.Vision RPA has an extensive command line API. This allows the UI.Vision RPA software to integrate with any application (e.g. Jenkins, Cucumber, CI/CD tools, ...) and any programming or scripting language (e.g. C#, Python, PowerShell, ...). The API includes detailed error reporting for reliable non-stop RPA operation.

In other words, UI.Vision RPA can be remote controlled from any other scripting language via its command line API. And in the other direction, UI.Vision RPA itself can call other scripts and programs via its XRun command.

UI.Vision RPA software has Enterprise-Grade Security. Your data never leaves your machine.

With its strict open-source security approach, UI.Vision RPA is more secure than any other Robotic Process Automation (RPA) solution in the market: UI.Vision RPA and its XModules are designed to fit the highest security and data protection standards for Enterprise use. All processing is done locally on your machine. The XModules native apps only communicate with the open-source UI.Vision RPA browser extension, and never contact the Internet in any way. No other RPA tools vendor offers this level of transparency.

UI Vision RPA does not send any data back to us or any other place. You can easily verify this statement because all internet communication - like loading websites in your browser - is done inside the open-source UI.Vision RPA core. The fact that UI.Vision RPA is open-source under an official Open-Source license guarantees you the freedom to run, study, share and modify the software.

The only exception to the 'all data is processed locally' rule is the OCR screen scraping feature and that is why it is disabled by default. Only when you explicitly enable it on the OCR tab - and use an OCR command - does it send images of text to our OCR API cloud service for text recognition. A 100% local on-premise OCR option is available as part of our UI.Vision RPA Enterprise plans.

User Quotes

I'm very impressed - in a few hours I was able to fully replicate a web app test setup that had taken a week or so to build in Visual Studio GUI testing.

Christian Berndt, Deloitte, France

We selected your automation testing framework for its focus on simplicity and easy maintenance. Your software is perfect for what we need.

Darren Myatt, Sony Europe, UK

We use UI.Vision to automate the process of extracting data files from our financial system. This used to be a manual process which now takes place automatically overnight. UI.Vision made the automation of the procedure very easy.

Ian Brown, UK
